We shouldn’t have to take an oath or apply the principles of Occam’s razor[1] when providing data on which others will base decisions; organisations today can’t afford to make decisions based on poor data or assumptions. If your business lacks confidence in the quality of its data, you’ll need to understand the root causes of the problems and fix them quickly. It’s all about quality and confidence. A lack of trust in the quality of your data will damage your credibility and users’ willingness to use your data. Of course, prevention is better than cure: it is well known that the cost of fixing a problem increases the further downstream it is discovered. Good, trusted data will deliver value back to your organisation earlier.

To establish and maintain your credibility, you need to have data of good quality that users have confidence in. A good place to start fixing data problems is with:

Understanding your Meta-data

Meta-data is the set of details that define and describe your data. It is essential for a true and correct understanding of your information, and it provides a single, meaningful source of definitions for the data.

Identifying the origins of your data

You should always know where your data came from and the circumstances under which it was created. You should seek to resolve cases of multiple sources of the same data: Is it the same? Why has it been duplicated? Which is the true origin? Making decisions on data of unknown origin introduces risk that needs to be eliminated.
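As an illustration of the "is it the same?" question, the following is a minimal sketch that compares two copies of the same data keyed by a shared identifier. The function name `compare_sources`, the record layout, and the sample values are all hypothetical; real sources would usually need key matching and value normalisation first.

```python
def compare_sources(source_a: dict, source_b: dict) -> dict:
    """Compare two {key: record} dictionaries that claim to hold the same data.

    Hypothetical helper: reports keys missing from either side and keys
    whose records disagree.
    """
    keys_a, keys_b = set(source_a), set(source_b)
    return {
        "only_in_a": sorted(keys_a - keys_b),
        "only_in_b": sorted(keys_b - keys_a),
        "conflicting": sorted(
            k for k in keys_a & keys_b if source_a[k] != source_b[k]
        ),
    }


# Invented sample data: two systems holding customer records.
crm = {"C001": {"name": "Ann Lee"}, "C002": {"name": "Bob Roy"}}
billing = {"C002": {"name": "Robert Roy"}, "C003": {"name": "Cy Dunn"}}

report = compare_sources(crm, billing)
```

Any key listed under `conflicting` is a case where the true origin needs to be decided before the data is used.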

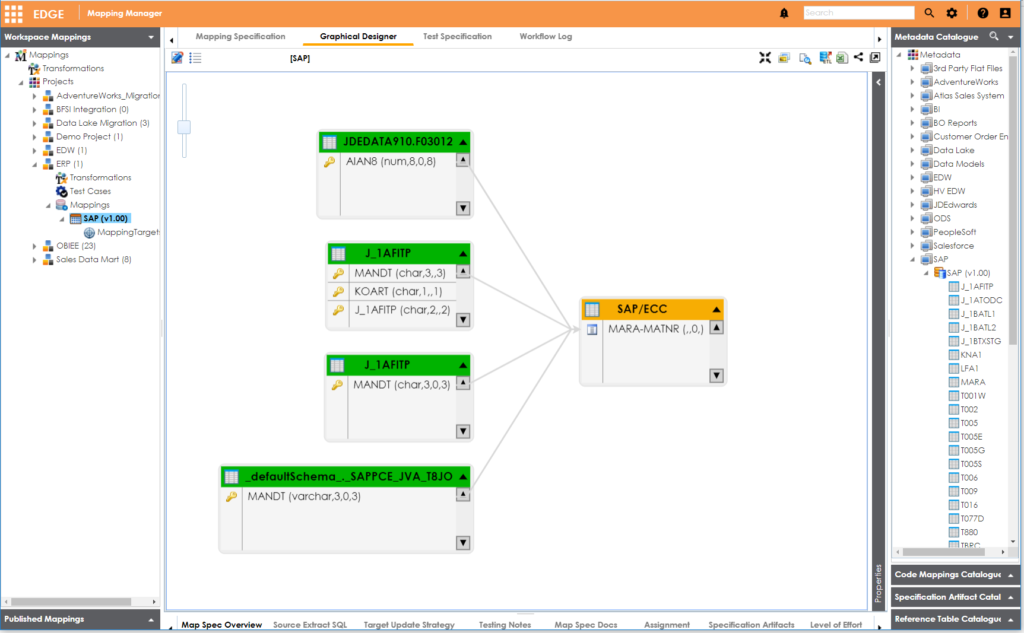

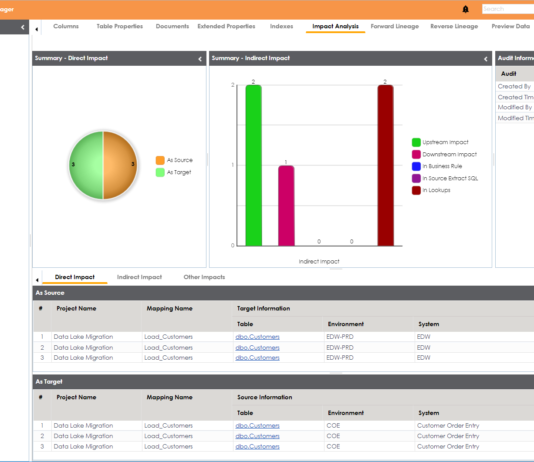

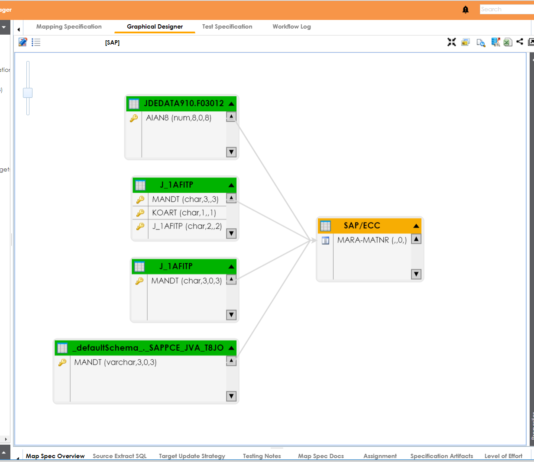

Defining the flow and lineage of data through data mappings

Data lineage is the process of unifying and mapping data fields from a source system to their related fields in the target system, and understanding any transformations that take place at the points of integration between the systems. Understanding data lineage is essential for operational and analytical processes such as data migration, data integration, BI reports, and analyses.
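To make the idea concrete, here is a minimal sketch of how field mappings and their transformations might be recorded and then traced back to an origin. The `FieldMapping` class, the `trace_lineage` function, and every system and field name are hypothetical, and the sketch assumes each target field is fed by exactly one source field.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FieldMapping:
    """One source-to-target field mapping (all names are hypothetical)."""
    source: str                           # "system.table.column" being read
    target: str                           # "system.table.column" being written
    transformation: Optional[str] = None  # description of any transformation


MAPPINGS = [
    FieldMapping("crm.customer.cust_nm", "staging.customer.full_name",
                 "trim whitespace"),
    FieldMapping("staging.customer.full_name",
                 "warehouse.dim_customer.customer_name"),
]


def trace_lineage(target: str, mappings: list) -> list:
    """Walk backwards from a target field to its original source.

    Returns the mappings in source-to-target order.
    """
    by_target = {m.target: m for m in mappings}
    path, seen, current = [], set(), target
    while current in by_target and current not in seen:  # guard against cycles
        seen.add(current)
        step = by_target[current]
        path.append(step)
        current = step.source
    path.reverse()
    return path


lineage = trace_lineage("warehouse.dim_customer.customer_name", MAPPINGS)
for step in lineage:
    print(step.source, "->", step.target, "|", step.transformation or "no change")
```

Recording the transformation alongside each mapping is what lets a report field be explained all the way back to the system that created it.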

Challenges with data mapping

However, it is not all plain sailing. There are challenges that need to be understood and addressed:

- Manual data mapping. This involves hand-coding the mappings between the data source and target database. Whilst this approach offers unlimited flexibility for unique mapping scenarios initially, it can eventually become challenging to maintain and scale as the mapping needs of the business grow.

- Inaccuracy. Any non-automated process has the potential to introduce errors due to incorrect assumptions or through simple operational mistakes during mapping. Inaccurate, duplicate, or otherwise decayed data can do more damage than having no data at all.

- Lost time. Manual verification of mappings to provide a greater level of confidence involves spending time double-checking and re-working scripts and schemas.

- Remaining current. As the requirements of the business change, so do the requirements placed on the data. The mappings need to respond to these changes, and it is preferable to have automated mechanisms that update them on a regular or scheduled basis.

Semi-automated and fully automated systems provide the best approach to building and maintaining data mappings. These involve reverse engineering code to identify existing mappings or using combinations of lexical analysers or artificial intelligence systems to discover potential mappings.
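As a rough illustration of lexical discovery, the sketch below proposes candidate mappings by comparing normalised field names using `difflib.SequenceMatcher` from the Python standard library. It is not how erwin Mapping Manager or any particular product works; the function names, the threshold of 0.6, and the sample fields are assumptions, and suggestions like these would still need human review.

```python
from difflib import SequenceMatcher


def normalise(name: str) -> str:
    """Lower-case a field name and drop common separators."""
    return name.lower().replace("_", "").replace("-", "").replace(" ", "")


def suggest_mappings(source_fields, target_fields, threshold=0.6):
    """Suggest the best-scoring target field for each source field.

    Hypothetical helper: a pair is only suggested when its name
    similarity reaches the (assumed) threshold.
    """
    suggestions = []
    for s in source_fields:
        best_target, best_score = None, 0.0
        for t in target_fields:
            score = SequenceMatcher(None, normalise(s), normalise(t)).ratio()
            if score > best_score:
                best_target, best_score = t, score
        if best_target is not None and best_score >= threshold:
            suggestions.append((s, best_target, round(best_score, 2)))
    return suggestions


candidates = suggest_mappings(
    ["Customer_Name", "order_date", "zzz"],
    ["customername", "OrderDate", "total"],
)
```

Name similarity alone can pair unrelated fields that happen to be spelled alike, which is why semi-automated approaches keep a person in the loop to confirm each candidate.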

There are many benefits to using a tool such as erwin Mapping Manager for automated data preparation and mapping, including the removal of human error and improved clarity and accuracy. You can read more on these here.

Conclusion

The cost of bad data quality can be counted in lost opportunities, bad decisions, and the time it takes to identify, cleanse, and correct erroneous data. Collaborative data management and the tools to correct errors at the point of origin are the clear ways to ensure data quality for everyone who needs it.

“Quality is more important than quantity” – Steve Jobs

Want to see erwin Mapping Manager in action? You can view our erwin Mapping Manager demo under the resources section of our website here or contact us for a personal demonstration.

__________________________________________________

[1] (A principle that states it is preferable to use the minimum number of assumptions that could provide a plausible explanation for the given data and facts)